Reasoning large language models (LLMs) are designed to solve complex problems by breaking them into a series of smaller steps. These models are particularly good at demanding tasks such as advanced programming and multistep planning.

But developing reasoning models demands an enormous amount of computation and energy due to inefficiencies in the training process. While a few of the high-power processors continuously work through complicated queries, others in the group sit idle.

Researchers from MIT and elsewhere have found a way to use this computational downtime to efficiently accelerate reasoning-model training.

The new method automatically trains a smaller, faster model to predict the outputs of the larger reasoning LLM, which the larger model then verifies. Because the larger model validates these predictions instead of generating every output from scratch, it does less work, which accelerates training.

The key to the system is its ability to train and deploy the smaller model adaptively, activating it only when some processors are idle. By putting computational resources that would otherwise be wasted to work, it accelerates training without additional overhead.

When tested on multiple reasoning LLMs, the method doubled training speed while preserving accuracy. This could reduce the cost and improve the energy efficiency of developing advanced LLMs for applications such as forecasting financial trends or detecting risks in power grids.

As demand grows for AI models that can handle increasingly complex tasks, so does the need for computational efficiency.

According to Qinghao Hu, an MIT postdoc and co-lead author of a paper on the technique, the team has developed a “lossless solution” to this challenge, meaning the speedup does not come at the expense of model accuracy.

Hu says the team built a “full-stack system” around this solution, which he expects to deliver “quite dramatic speedups in practice.”

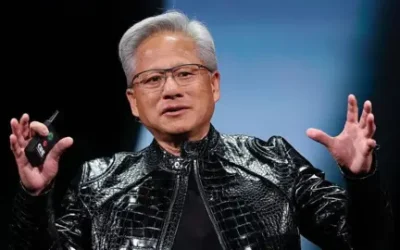

The forthcoming research paper, slated for presentation at the prestigious ACM International Conference on Architectural Support for Programming Languages and Operating Systems, credits a diverse team of contributors. Co-leading the authorship is Shang Yang, an Electrical Engineering and Computer Science (EECS) graduate student, joined by fellow EECS graduate student Junxian Guo. The project’s senior author is Song Han, an associate professor in EECS, a member of the Research Laboratory of Electronics, and a distinguished scientist at NVIDIA. Further essential contributions stemmed from researchers representing NVIDIA, ETH Zurich, the MIT-IBM Watson AI Lab, and the University of Massachusetts at Amherst.

## Training bottleneck

Developers want reasoning LLMs to identify and correct mistakes in their own critical thinking. This capability lets the models handle complicated queries that would trip up a standard LLM.

To teach them this skill, developers train reasoning LLMs using a technique called reinforcement learning (RL). The model generates multiple candidate answers to a query and receives a reward for the best one. This feedback loop, repeated many times, lets the model gradually improve its reasoning.

Researchers have found that this response-generation phase, called rollout, can consume up to 85% of the execution time of RL training.

“The actual ‘training’ of the model, which involves updating its parameters, is surprisingly quick,” explains Hu.
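To see why the rollout share matters, a back-of-the-envelope estimate using Amdahl's law may help. The sketch below is illustrative only: the function name `overall_speedup` and the sample speedup factors are assumptions made for this example, and the 0.85 rollout fraction treats the "up to 85%" figure as if it were exact.

```python
def overall_speedup(rollout_fraction: float, rollout_speedup: float) -> float:
    """Amdahl's-law estimate of end-to-end speedup when only rollout gets faster.

    rollout_fraction: share of total training time spent in rollout (0..1).
    rollout_speedup:  hypothetical factor by which rollout alone is accelerated.
    """
    if not 0.0 <= rollout_fraction <= 1.0:
        raise ValueError("rollout_fraction must be between 0 and 1")
    if rollout_speedup <= 0.0:
        raise ValueError("rollout_speedup must be positive")
    # Time left after the change, as a fraction of the original time:
    # the untouched part plus the accelerated rollout part.
    remaining = (1.0 - rollout_fraction) + rollout_fraction / rollout_speedup
    return 1.0 / remaining


if __name__ == "__main__":
    # Hypothetical rollout speedups, with rollout assumed to be 85% of the time.
    for factor in (1.5, 2.0, 4.0):
        total = overall_speedup(0.85, factor)
        print(f"rollout {factor:.1f}x faster -> training {total:.2f}x faster")
```

Under these assumptions, even an unlimited rollout speedup cannot make training more than about 1 / 0.15 times faster, because the parameter-update phase is left unchanged.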

The bottleneck arises because, in standard RL algorithms, all processors in the training group must finish generating their responses before any of them can move on to the next step. Since some processors may be working on very long responses, those that produced shorter responses sit idle while they wait.

Hu explained that their objective was to transform downtime into accelerated progress, all without incurring additional expenses.
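The cost of waiting at this synchronization point can be illustrated with a toy calculation. This is a minimal sketch, not a model of any real training system: it assumes each processor handles one response, that generation time is proportional to response length, and the response lengths below are made up to show a long-tailed batch. The name `idle_fraction` is hypothetical.

```python
from typing import Sequence


def idle_fraction(response_lengths: Sequence[int]) -> float:
    """Share of total processor-time spent waiting for the slowest response.

    Assumes one response per processor and time proportional to length.
    """
    if not response_lengths:
        raise ValueError("need at least one response length")
    # Every processor is held until the longest response in the group is done.
    step_time = max(response_lengths)
    busy_time = sum(response_lengths)
    total_time = step_time * len(response_lengths)
    return 1.0 - busy_time / total_time


if __name__ == "__main__":
    # Hypothetical batch: seven short responses and one very long one.
    lengths = [200, 300, 250, 400, 350, 300, 250, 8000]
    print(f"idle share of processor-time: {idle_fraction(lengths):.0%}")
```

A single very long response dominates the step time, so most of the group's processor-time in this made-up batch is spent waiting. That waiting time is what the researchers set out to reclaim.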

Researchers aimed to accelerate the process by employing a technique known as speculative decoding. This method involves training a more compact model, termed a “drafter,” to efficiently predict the future outputs of a larger, more complex model.

The larger model then verifies the drafter’s guesses, and the responses it accepts are used for training.

Because the larger model can verify all of the drafter’s guesses at once, rather than generating each output one step at a time, the process is faster.
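The draft-then-verify loop can be sketched with two toy "models" that are plain Python functions over integer tokens. Everything here is an assumption made for illustration: `target_next`, `draft_next`, the greedy acceptance rule, and the draft length `k` are stand-ins, not the researchers' implementation. A real system would also run the verification as one parallel pass of the large model rather than a Python loop.

```python
from typing import List, Sequence, Tuple


def target_next(context: Sequence[int]) -> int:
    """Toy stand-in for the large model: deterministic next token."""
    return (sum(context) * 31 + len(context)) % 50


def draft_next(context: Sequence[int]) -> int:
    """Toy drafter: agrees with the target except at every fourth position."""
    guess = target_next(context)
    return guess if len(context) % 4 else (guess + 1) % 50


def speculative_step(context: Sequence[int], k: int) -> List[int]:
    """Draft k tokens, then keep the prefix the target model agrees with."""
    # 1. The drafter guesses k tokens, each conditioned on its own guesses.
    proposal: List[int] = []
    for _ in range(k):
        proposal.append(draft_next(list(context) + proposal))

    # 2. The target checks each position (conceptually all at once).
    accepted: List[int] = []
    for guess in proposal:
        expected = target_next(list(context) + accepted)
        if guess != expected:
            accepted.append(expected)  # replace the first wrong guess and stop
            return accepted
        accepted.append(guess)

    # 3. All guesses accepted: the target contributes one extra token.
    accepted.append(target_next(list(context) + accepted))
    return accepted


def generate(prompt: Sequence[int], n_tokens: int, k: int = 4) -> Tuple[List[int], int]:
    """Generate n_tokens speculatively; return tokens and verification steps used."""
    out = list(prompt)
    steps = 0
    while len(out) - len(prompt) < n_tokens:
        out.extend(speculative_step(out, k))
        steps += 1
    return out[len(prompt):len(prompt) + n_tokens], steps


def greedy(prompt: Sequence[int], n_tokens: int) -> List[int]:
    """Baseline: the target model generates one token per step."""
    out = list(prompt)
    for _ in range(n_tokens):
        out.append(target_next(out))
    return out[len(prompt):]


if __name__ == "__main__":
    tokens, steps = generate([1, 2, 3], 20)
    print("same output as plain decoding:", tokens == greedy([1, 2, 3], 20))
    print("verification steps used:", steps, "instead of 20")
```

In this sketch the speculative output is identical to what the target model would have produced alone, which mirrors the "lossless" property described in the article. The saving comes from needing fewer verification steps than tokens.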

## An adaptive solution

In speculative decoding, however, the drafter model is typically trained only once and then remains static. This makes the technique ill-suited to reinforcement learning, where the reasoning model is updated thousands of times during training.

A static drafter would quickly become stale and of little use after only a few steps.

To overcome this problem, the researchers created a flexible system known as “Taming the Long Tail,” or TLT.

The first component of TLT is an adaptive trainer for the drafter model, which uses free time on idle processors to train the drafter on the fly. This keeps the drafter well aligned with the target model without requiring extra computational resources.

The second component is an adaptive rollout engine, which manages speculative decoding and automatically selects the best strategy for each new batch of inputs. Rather than using static settings, it adjusts the speculative decoding configuration based on features of the training workload, such as the number of inputs processed by the draft model and the number of inputs accepted by the target model during verification.
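One way to picture this kind of adjustment is a heuristic that picks how many tokens to draft from the observed acceptance rate. The sketch below is not TLT's policy, which the article does not specify. It assumes each drafted token is accepted independently with the same probability, uses the standard expected-length formula for speculative decoding under that assumption, and introduces hypothetical names and parameters (`choose_draft_len`, `draft_cost`, `max_len`).

```python
def expected_tokens(acceptance_rate: float, draft_len: int) -> float:
    """Expected tokens produced per verification step.

    Assumes each drafted token is accepted independently with probability
    acceptance_rate; the step always yields at least one token.
    """
    a = acceptance_rate
    if a >= 1.0:
        return draft_len + 1.0
    return (1.0 - a ** (draft_len + 1)) / (1.0 - a)


def choose_draft_len(proposed: int, accepted: int,
                     draft_cost: float = 0.1, max_len: int = 8) -> int:
    """Pick the draft length with the best tokens-per-cost ratio.

    proposed / accepted: running counts of drafted and accepted tokens.
    draft_cost: hypothetical cost of one drafter step, relative to one
                verification pass of the target model (cost 1.0).
    Returns 0 when drafting is not expected to pay off.
    """
    rate = accepted / proposed if proposed else 0.0
    best_len, best_throughput = 0, 1.0  # no drafting: one token per unit cost
    for k in range(1, max_len + 1):
        throughput = expected_tokens(rate, k) / (1.0 + k * draft_cost)
        if throughput > best_throughput:
            best_len, best_throughput = k, throughput
    return best_len


if __name__ == "__main__":
    # Hypothetical counters gathered from recent batches.
    for accepted in (5, 50, 90):
        k = choose_draft_len(proposed=100, accepted=accepted)
        print(f"acceptance {accepted}% -> draft length {k}")
```

The design point this illustrates is that a fixed configuration is rarely best: when the drafter's guesses are often rejected, shorter drafts (or none) waste less work, and when they are usually accepted, longer drafts pay off.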

The researchers designed the draft model to be lightweight so it can be trained quickly. TLT also reuses some components of the reasoning model’s training pipeline to train the drafter, which yields further acceleration.

As soon as some processors finish their short queries and become idle, the system switches them to draft model training, using the same data they are already using for the rollout process. According to Hu, the key mechanism is the team’s “adaptive speculative decoding.” “These gains wouldn’t be possible without it,” Hu says.

When tested on LLMs trained with real-world datasets, TLT accelerated training by 70% to 210% while preserving each model’s accuracy.

As an added bonus, the small drafter model could readily be utilized for efficient deployment as a free byproduct.

In the future, the researchers want to integrate TLT into more types of training and inference frameworks and to find new reinforcement learning applications that this approach could accelerate.

“Reasoning continues to become the major workload driving the demand for inference. Qinghao’s TLT is great work to cope with the computation bottleneck of training these reasoning models. I think this method will be very helpful in the context of efficient AI computing,” Han says.

This research is supported by the MIT-IBM Watson AI Lab, the MIT AI Hardware Program, the MIT Amazon Science Hub, Hyundai Motor Company, and the National Science Foundation.