Across industries ranging from entertainment to healthcare, 3D printing empowers designers and engineers to swiftly develop functional prototypes. This innovative technology allows for the rapid creation of everything from detailed movie props to critical medical devices. For these fabricated objects to meet stringent performance expectations, however, the accuracy of digital print previews is absolutely critical, ensuring users can trust the final output.

While current 3D printing software excels at generating functional previews, it often falls short in accurately representing aesthetics. This critical oversight frequently leads to a disconnect between a user’s visual expectations and the final printed object, which may exhibit unintended variances in color, texture, or shading. The inevitable consequence is a frustrating and costly cycle of multiple reprints, squandering valuable time, effort, and raw materials.

Researchers from MIT and collaborating institutions have unveiled an innovative, user-friendly preview tool designed to help creators vividly visualize the final appearance of fabricated objects. The system uniquely prioritizes aesthetic fidelity, allowing users to accurately envision their designs long before they materialize.

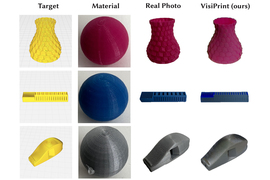

To initiate the process, users provide two key pieces of information: a digital representation of their 3D object – often a screenshot directly from their CAD or slicing software – alongside a single image depicting the chosen print material. Leveraging these inputs, the intelligent system then automatically generates a high-fidelity rendering, offering a realistic preview of how the final, fabricated 3D object is likely to appear.

The VisiPrint system, an advanced artificial intelligence solution, is engineered to significantly enhance 3D printing processes. Designed for broad compatibility, it seamlessly integrates with diverse 3D-printing software and supports an extensive range of materials. Beyond mere color assessment, VisiPrint conducts sophisticated analyses of crucial aesthetic elements, meticulously evaluating factors such as gloss, translucency, and the subtle yet impactful influence of the fabrication process on an object’s final visual appearance.

These advanced aesthetic preview capabilities hold significant potential for enhancing visual accuracy across multiple professional fields. For example, in dentistry, clinicians could leverage them to ensure temporary crowns and bridges flawlessly integrate with a patient’s natural dentition. Similarly, in architecture, these tools would empower designers to meticulously assess the visual impact and overall harmony of their conceptual models.

Despite its potential, 3D printing faces a substantial sustainability challenge. Studies indicate that **one-third of all materials used in 3D printing can end up as landfill waste**, primarily because prototypes are repeatedly printed and discarded during design iteration.

Addressing this significant environmental footprint requires a fundamental shift, according to Maxine Perroni-Scharf, an electrical engineering and computer science (EECS) graduate student and lead author of a paper on the VisiPrint technology. Perroni-Scharf explains that to make 3D printing more sustainable, the objective is to **minimize the number of attempts needed to perfect a prototype**. This means designers should no longer be compelled to experiment with every available printing material before finalizing a design.

Perroni-Scharf’s co-authors include Faraz Faruqi, a fellow EECS graduate student at MIT; MIT undergraduate Raul Hernandez; SooYeon Ahn, a graduate student at the Gwangju Institute of Science and Technology; Szymon Rusinkiewicz, a professor of computer science at Princeton University; and William Freeman, the Thomas and Gerd Perkins Professor of EECS and a member of the Computer Science and Artificial Intelligence Laboratory (CSAIL). The senior author is Stefanie Mueller, an associate professor of EECS and Mechanical Engineering at MIT who is also affiliated with CSAIL. The study is scheduled for presentation at the forthcoming ACM CHI Conference on Human Factors in Computing Systems.

The researchers focused primarily on fused deposition modeling (FDM), the predominant method in contemporary 3D printing. This additive manufacturing technique heats a filament of the chosen print material until it melts. The molten material is then extruded through a fine nozzle, building the object one layer at a time.

Creating precise visual representations of a material’s final look presents a significant hurdle. This is because the actual manufacturing process, involving melting and extrusion, can alter the material’s inherent color and texture. Furthermore, the thickness of each applied layer and the specific route taken by the fabrication nozzle during production all contribute to variations in the aesthetic outcome.

VisiPrint employs a dual-AI system designed to tackle these specific obstacles.

VisiPrint generates its preview from two inputs: a screenshot of the 3D design taken from the user’s 3D printing software (known as “slicer” software) and an image of the print material. The material image can be sourced online or captured from a physical printed sample.

A computer vision model analyzes the material sample, extracting the key visual features that capture the material’s appearance.
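To make the idea of extracting appearance features concrete, here is a minimal sketch. It is not VisiPrint’s model: the function name `material_features`, the three hand-picked statistics, and the 0.9 highlight threshold are all assumptions chosen for illustration, standing in for whatever learned features the actual computer vision model produces.

```python
import numpy as np

def material_features(image: np.ndarray) -> dict:
    """Summarize a material photo (H x W x 3, values in [0, 1]).

    Hypothetical stand-in for learned features: simple statistics only.
    """
    rgb = np.asarray(image, dtype=np.float64)
    # Rec. 709 luma weights give a per-pixel brightness estimate.
    luminance = rgb @ np.array([0.2126, 0.7152, 0.0722])
    return {
        # Average color of the sample.
        "mean_color": rgb.reshape(-1, 3).mean(axis=0),
        # Brightness variation, a rough proxy for visible texture.
        "contrast": float(luminance.std()),
        # Share of very bright pixels, a rough proxy for gloss.
        "highlight_fraction": float((luminance > 0.9).mean()),
    }

# Toy sample: a mid-gray patch with a single bright highlight pixel.
sample = np.full((4, 4, 3), 0.5)
sample[0, 0] = 1.0
features = material_features(sample)
```

In this toy sample, one of the 16 pixels is a highlight, so `highlight_fraction` comes out to 0.0625. A real material photo would yield richer statistics, and a learned model would capture far more than these three numbers.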

The extracted material features are then fed to a generative AI model, which computes the object’s geometry and structure while incorporating the “slicing” pattern, the path the nozzle will follow as it extrudes each layer.

The key to the researchers’ approach is a specialized conditioning technique. It adjusts the inner workings of the model, steering it to follow the slicing pattern and respect the constraints of 3D printing.

The conditioning technique uses a depth map to preserve the object’s shape and shading, while an edge map captures its internal contours and structural lines.

“Achieving the ideal combination of these two elements is crucial to avoid flawed geometry or an inefficient slicing pattern. Meticulous care was taken to ensure they were integrated correctly,” explained Perroni-Scharf.
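As a rough illustration of what balancing a depth map against an edge map might look like, consider the sketch below. The article does not describe how the two maps are actually combined, so everything here is an assumption: the gradient-based `edge_map`, the `combine_conditions` helper, and the 0.6/0.4 weights are hypothetical and are not the authors’ implementation.

```python
import numpy as np

def edge_map(gray: np.ndarray) -> np.ndarray:
    """Gradient-magnitude edge map, normalized to [0, 1]."""
    gy, gx = np.gradient(gray.astype(np.float64))
    magnitude = np.hypot(gx, gy)
    peak = magnitude.max()
    return magnitude / peak if peak > 0 else magnitude

def combine_conditions(depth: np.ndarray, edges: np.ndarray,
                       depth_weight: float = 0.6,
                       edge_weight: float = 0.4) -> np.ndarray:
    """Stack weighted depth and edge maps into a 2-channel signal.

    The weights are illustrative; tuning them trades shape fidelity
    (depth) against how strongly slicing lines (edges) are enforced.
    """
    if depth.shape != edges.shape:
        raise ValueError("depth and edge maps must have the same shape")
    return np.stack([depth_weight * depth, edge_weight * edges], axis=0)

# Toy inputs: a left-to-right depth ramp and a screenshot with one
# vertical boundary, standing in for a slicer screenshot.
depth = np.tile(np.linspace(0.0, 1.0, 8), (8, 1))
screenshot = np.zeros((8, 8))
screenshot[:, 4:] = 1.0
edges = edge_map(screenshot)
condition = combine_conditions(depth, edges)
```

Raising `edge_weight` relative to `depth_weight` would push such a system to honor structural lines more strictly at the expense of smooth shading, which is the kind of trade-off the quote alludes to.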

The team also built a user-friendly interface that lets users upload the required images and view the resulting preview.

For experienced creators, the VisiPrint interface unlocks a deeper level of control, allowing them to fine-tune various parameters, including how specific colors impact the final output.

Ultimately, VisiPrint’s aesthetic preview is designed to complement the functional preview generated by slicer software. Its purpose is purely visual: it does not evaluate printability, mechanical integrity, or the potential for print failure.

In a user study, the researchers compared VisiPrint directly against alternative approaches. An overwhelming majority of participants rated VisiPrint’s previews higher for overall appearance. They also said its previews more closely matched the surface texture of actual printed objects.

The VisiPrint preview process demonstrated exceptional efficiency, averaging approximately one minute per task. This speed significantly outpaced rival systems, performing more than twice as fast as any competing method.

VisiPrint also compared favorably with other AI interfaces. Perroni-Scharf noted that general-purpose AI models often falter when processing screenshots, introducing inaccuracies such as arbitrary shape changes or incorrect slicing patterns. This weakness, she explained, stems from their lack of specific, direct conditioning, which VisiPrint provides.

Looking ahead, researchers are poised to enhance model preview fidelity by tackling persistent rendering artifacts that emerge during the display of extremely intricate designs. Further expanding user control, they plan to integrate features enabling optimization of diverse printing parameters, extending capabilities far beyond merely selecting material color.

“It is imperative that we critically examine our object fabrication methods and continually strive to develop techniques that significantly reduce waste,” states Perroni-Scharf. She identifies the “marriage of AI with the physical making process” as a particularly “exciting area of future work” crucial for advancing sustainable production.

Patrick Baudisch, a computer science professor at the Hasso Plattner Institute, notes that the “what you see is what you get” (WYSIWYG) principle was a pivotal factor in the rise of desktop publishing in the 1980s, enabling users to achieve desired outcomes on their initial attempt. Baudisch suggests that adopting a similar WYSIWYG approach for 3D printing is now timely, and he views VisiPrint as a significant advancement toward this goal.

This research received partial funding from both the MIT Morningside Academy for Design Fellowship and the MIT MathWorks Fellowship.