Managing a power grid is like trying to solve an enormous puzzle whose pieces never stop moving.

Grid operators must ensure that the right amount of power flows to the right areas at the exact moment it is needed, and they must do so at minimal cost without overloading physical infrastructure. This is not a one-time calculation: the problem has to be solved again and again, as quickly as possible, as demand for power changes from moment to moment.

MIT researchers have developed a problem-solving tool for complex, recurring problems of this kind. It finds the optimal solution much faster than conventional methods while ensuring that the solution satisfies all of the system’s constraints. In a power grid, those constraints could include generator capacities and transmission line limits.
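To make the notion of a constraint concrete, here is a minimal Python sketch that checks whether a candidate dispatch respects generator capacities, line limits, and the balance between supply and demand. The function names, the two-generator example, and all numbers are illustrative assumptions and are not taken from the researchers’ work.

```python
import numpy as np

def dispatch_violations(gen_output, gen_max, line_flow, line_limit, demand):
    """Measure how far a candidate dispatch is from each constraint (0.0 means satisfied)."""
    gen_output = np.asarray(gen_output, dtype=float)
    gen_max = np.asarray(gen_max, dtype=float)
    line_flow = np.asarray(line_flow, dtype=float)
    line_limit = np.asarray(line_limit, dtype=float)
    return {
        # Inequality constraints: outputs within [0, capacity], flows within line limits.
        "generator_capacity": float(np.maximum(gen_output - gen_max, 0.0).sum()),
        "negative_output": float(np.maximum(-gen_output, 0.0).sum()),
        "line_limit": float(np.maximum(np.abs(line_flow) - line_limit, 0.0).sum()),
        # Equality constraint: total generation must match demand.
        "power_balance": float(abs(gen_output.sum() - demand)),
    }

def is_feasible(violations, tol=1e-6):
    """A dispatch is feasible only if every violation is (numerically) zero."""
    return all(v <= tol for v in violations.values())

# Hypothetical example: generator 2 is asked for 50 units but can supply only 40.
candidate = dispatch_violations(
    gen_output=[60.0, 50.0], gen_max=[80.0, 40.0],
    line_flow=[30.0], line_limit=[50.0], demand=110.0,
)
print(candidate["generator_capacity"])  # 10.0
print(is_feasible(candidate))           # False
```

A fast prediction that fails a check like this cannot be deployed, however cheap it looks on paper.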

The tool builds a feasibility-seeking step directly into a machine-learning model that is trained to solve the problem. The model first generates an initial prediction.

Taking the model’s initial prediction as a starting point, the system then iteratively refines the solution until it arrives at the best answer that is also achievable in practice.

The MIT system can solve complex problems several times faster than traditional solvers while offering strong guarantees of success.

For some extremely complex problems, it can even find better solutions than well-established tools. It also improves on pure machine-learning approaches, which are fast but often fail to find feasible solutions.

Although it was designed to optimize power production in an electric grid, the tool could be applied to many other complex problems, such as developing new products, managing investment portfolios, or planning production to meet consumer demand.

Solving today’s hardest technical problems requires combining tools from machine learning, optimization, and electrical engineering, says Priya Donti, the Silverman Family Career Development Professor in MIT’s Department of Electrical Engineering and Computer Science (EECS) and a principal investigator at the Laboratory for Information and Decision Systems (LIDS).

The goal, Donti explains, is to develop methods that strike the right balance between delivering value to a domain and meeting its requirements. “You have to look at the needs of the application,” she says, “and design methods in a way that actually fulfills those needs.”

The tool, called FSNet, is described in an open-access paper co-authored by Donti, the senior author, and lead author Hoang Nguyen, an EECS graduate student. The paper will be presented at the Conference on Neural Information Processing Systems.

Rather than relying on a single method, the researchers combine approaches so that the strengths of one offset the weaknesses of the other.

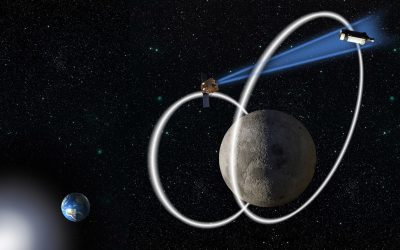

Ensuring optimal power flow in an electric grid is an extremely hard problem, and one that operators find increasingly difficult to solve quickly enough.

Integrating more renewable energy into the grid creates new operational challenges, Donti explains. Operators must manage generation that fluctuates from moment to moment while coordinating a growing number of distributed devices.

Grid operators often rely on traditional solvers, which provide mathematical guarantees that the optimal solution satisfies every constraint. But for especially large or complex problems, these tools can take hours or even days to reach a solution.

Deep-learning models can solve even very hard problems quickly, but their solutions may ignore important constraints. For a power grid operator, this could mean unsafe voltage levels or even a blackout.

Machine-learning models often fail to satisfy all constraints because of the many errors that arise during training, Nguyen explains.

With FSNet, the researchers combined the best of both approaches in a two-step problem-solving framework.

Focusing on feasibility

In the first step, a neural network predicts a solution to the optimization problem. Loosely inspired by neurons in the human brain, neural networks are deep-learning models that excel at recognizing patterns in data.

Next, a traditional solver built into FSNet performs a feasibility-seeking step. This optimization algorithm iteratively refines the initial prediction until the solution satisfies every constraint.

Because the feasibility-seeking step is based on a mathematical model of the problem, it can guarantee that the resulting solution is deployable.
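The two steps can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the researchers’ implementation: the constraints are taken to be linear, the “prediction” is a hard-coded stand-in for a neural network’s output, plain gradient descent on the squared constraint violation plays the role of the feasibility-seeking solver, and every name (`violation`, `seek_feasibility`, `A_eq`, `b_eq`, `A_ub`, `b_ub`) is hypothetical.

```python
import numpy as np

def violation(x, A_eq, b_eq, A_ub, b_ub):
    """Squared violation of A_eq x = b_eq and A_ub x <= b_ub; zero exactly when x is feasible."""
    eq = A_eq @ x - b_eq
    ineq = np.maximum(A_ub @ x - b_ub, 0.0)
    return float(eq @ eq + ineq @ ineq)

def seek_feasibility(x0, A_eq, b_eq, A_ub, b_ub, tol=1e-12, max_iter=10_000):
    """Refine x0 by gradient descent on the violation until it is numerically zero."""
    # Step size 1/L, where L bounds the curvature of the violation function.
    L = 2.0 * (np.linalg.norm(A_eq, 2) ** 2 + np.linalg.norm(A_ub, 2) ** 2)
    x = np.asarray(x0, dtype=float).copy()
    for _ in range(max_iter):
        if violation(x, A_eq, b_eq, A_ub, b_ub) <= tol:
            break
        grad = (2.0 * A_eq.T @ (A_eq @ x - b_eq)
                + 2.0 * A_ub.T @ np.maximum(A_ub @ x - b_ub, 0.0))
        x -= grad / L
    return x

# Toy problem (assumed): two generators must together supply 110 units,
# with outputs between 0 and their capacities of 80 and 40.
A_eq = np.array([[1.0, 1.0]])
b_eq = np.array([110.0])
A_ub = np.vstack([np.eye(2), -np.eye(2)])
b_ub = np.array([80.0, 40.0, 0.0, 0.0])

predicted = np.array([60.0, 50.0])  # stand-in for step one, the network's prediction
refined = seek_feasibility(predicted, A_eq, b_eq, A_ub, b_ub)  # step two
print(refined, violation(refined, A_eq, b_eq, A_ub, b_ub))
```

The refined point satisfies the constraints up to the chosen tolerance, but this sketch makes no attempt to keep it cheap; in a full system the network would be trained so that the point reached after refinement is also a good solution.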

This step is critical, Nguyen says, because FSNet is designed to deliver the guarantees that real-world applications require.

The researchers designed FSNet to handle both equality and inequality constraints at the same time. This makes it easier to use than other approaches, which may require customizing the neural network or solving for each type of constraint separately.
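One generic way to treat both constraint types in a single expression is a combined violation measure. The notation below ($f$, $g$, $h$, and $\phi$) is assumed for illustration and may differ from the formulation in the paper.

$$
\min_{x} \; f(x) \quad \text{subject to} \quad h(x) = 0, \;\; g(x) \le 0,
\qquad
\phi(x) = \lVert h(x) \rVert_2^2 + \lVert \max\bigl(g(x),\, 0\bigr) \rVert_2^2 .
$$

Here the maximum is taken elementwise, so $\phi(x) = 0$ exactly when $x$ satisfies every equality and inequality constraint, and a single procedure that drives $\phi$ to zero handles both types at once.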

Users can plug in and experiment with different optimization solvers, Donti says.

By rethinking how a neural network approaches complex optimization problems, the researchers arrived at a technique that works better, Donti adds.

The researchers compared FSNet with traditional solvers and pure machine-learning approaches on a range of challenging problems, including power grid optimization. FSNet cut solving times by orders of magnitude relative to the baseline approaches while still satisfying all problem constraints.

FSNet also found better solutions to some of the hardest problems.

The result was surprising at first but ultimately makes sense, Donti explains. The neural network can work out additional structure in the data on its own, she says, structure that the original optimization solver was never designed to exploit.

In the future, the researchers want to make FSNet less memory-intensive, incorporate more efficient optimization algorithms, and scale it up to tackle more complex, realistic problems.

Kyri Baker, an associate professor at the University of Colorado Boulder who was not involved in the work, stresses that for hard optimization problems, finding a feasible solution matters more than finding one that is merely close to optimal. For physical systems like power grids, Baker says, a near-optimal strategy is worthless if it cannot be implemented.

According to Baker, the research is an important step toward deep-learning models whose predictions satisfy constraints, with explicit guarantees on constraint enforcement.

Feasibility has been a persistent challenge for machine-learning-based optimization. The new work couples end-to-end learning with an unrolled feasibility-seeking procedure that minimizes equality and inequality violations.

Ferdinando Fioretto, an assistant professor at the University of Virginia who was not involved in the study, praised the development, remarking that the results are “very promising.” He added, “I look forward to see where this research will head.”