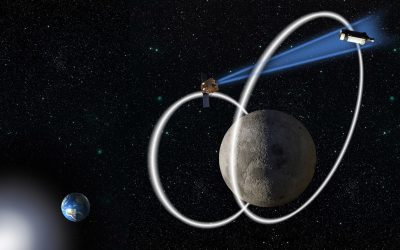

Consider a robot searching for workers trapped in a partially collapsed mine shaft. As it moves through the unstable, treacherous terrain, it must quickly build a map of the scene and work out its own location within that map, all in real time.

Researchers are building powerful machine-learning models that perform this task using only images from a robot's onboard cameras. However, even the best models can process only a few images at a time. In a real disaster, where every second counts, a search-and-rescue robot would need to cover large areas quickly and process thousands of images to complete its mission.

To overcome this limitation, MIT researchers developed a new system that combines recent artificial intelligence vision models with classical computer vision techniques.

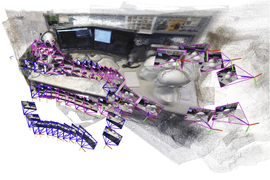

The system can process an arbitrary number of images. It generates accurate 3D maps of complex scenes, such as a crowded office corridor, in a matter of seconds.

The AI-driven system builds the full 3D map incrementally. It creates smaller "submaps" of the scene, aligns them, and stitches them together, while estimating the robot's position in real time.

Unlike many conventional methods, the technique does not require calibrated cameras or an expert to tune a complex system implementation. Because it is simple and produces high-quality 3D reconstructions quickly, it should be easier to scale up for real-world applications.

The method could do more than help search-and-rescue robots navigate. It could also enable extended reality (XR) applications on wearable devices such as virtual reality headsets, or help industrial robots quickly find and move goods inside a warehouse.

For robots to take on more complex tasks, they need much richer maps of their surroundings. The challenge is to build those maps without making them harder to deploy in practice.

"We've shown that it is possible to generate an accurate 3D reconstruction in a matter of seconds with a tool that works out of the box," says Dominic Maggio, an MIT graduate student and lead author of a new paper on the method.

Maggio's co-authors are postdoc Hyungtae Lim and senior author Luca Carlone. Carlone is an associate professor in MIT's Department of Aeronautics and Astronautics (AeroAstro), a principal investigator in the Laboratory for Information and Decision Systems (LIDS), and director of the MIT SPARK Laboratory. The research will be presented at the upcoming Conference on Neural Information Processing Systems.

**Mapping out a solution**

For years, researchers have grappled with a core problem in robotic navigation called simultaneous localization and mapping (SLAM). In SLAM, a robot builds a map of its surroundings while determining its own position within that map.

Traditional optimization methods for SLAM often fail in challenging scenes, or they require the robot's onboard cameras to be calibrated beforehand. To avoid these pitfalls, researchers increasingly train machine-learning models to learn the task directly from data.

These models are simpler to implement, but even the best of them can process only about 60 camera images at a time. That makes them impractical for applications where a robot must move quickly through a varied environment while processing thousands of images.

To solve this problem, the MIT researchers designed a system that generates smaller submaps of the scene instead of mapping the entire scene at once. It then stitches these submaps together into one overall 3D reconstruction. The underlying model still processes only a few images at a time, but stitching submaps lets the system recreate much larger scenes far faster.
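The stitching idea can be illustrated with a minimal sketch. This is a simplified 2D analogue, not the researchers' implementation. It assumes that each submap is a list of 2D points in its own local frame, and that the rigid pose of each submap relative to the previous one is already known. The names `apply_pose`, `compose`, and `stitch` are hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]
# Hypothetical 2D rigid pose: (rotation angle in radians, x translation, y translation).
Pose = Tuple[float, float, float]


def apply_pose(pose: Pose, p: Point) -> Point:
    """Map point p from a submap's local frame into the frame described by pose."""
    theta, tx, ty = pose
    c, s = math.cos(theta), math.sin(theta)
    return (c * p[0] - s * p[1] + tx, s * p[0] + c * p[1] + ty)


def compose(a: Pose, b: Pose) -> Pose:
    """Return the single pose equivalent to applying b first, then a."""
    tx, ty = apply_pose(a, (b[1], b[2]))
    return (a[0] + b[0], tx, ty)


def stitch(submaps: List[List[Point]], relative: List[Pose]) -> List[Point]:
    """Chain relative poses (submap i+1 expressed in submap i's frame) so every
    submap lands in the frame of submap 0, then concatenate all the points."""
    global_pose: Pose = (0.0, 0.0, 0.0)
    merged = list(submaps[0])
    for pts, rel in zip(submaps[1:], relative):
        global_pose = compose(global_pose, rel)
        merged.extend(apply_pose(global_pose, p) for p in pts)
    return merged


# Two hypothetical corridor submaps, the second starting 1 m further along x.
corridor = stitch([[(0.0, 0.0), (1.0, 0.0)], [(0.0, 0.0), (1.0, 0.0)]], [(0.0, 1.0, 0.0)])
print(corridor)  # [(0.0, 0.0), (1.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
```

Each pose is composed onto the one before it. As a result, an error in a single relative pose carries through to every later submap, which is why accurate alignment between submaps matters so much.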

The solution seemed simple, but when Maggio first tried it, he was surprised by how poorly it worked.

Looking for an explanation, Maggio dug into computer vision research papers from the 1980s and 1990s. He realized that errors in the way machine-learning models process images made aligning submaps a harder problem.

Traditionally, submaps are aligned by applying rotations and translations until they line up. However, the newer learned models can introduce ambiguities and deformations into the submaps, which makes them much harder to align.

For instance, a 3D submap of one side of a room might have walls that are slightly bent or stretched. Rotating and translating such a deformed submap is not enough to align it accurately.
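A small 2D experiment shows why rotation and translation alone fall short. The sketch below is an illustration, not the researchers' code. It fits the least-squares rotation and translation between corresponding points, which is the 2D form of classical Procrustes (Kabsch) alignment. The room dimensions, the 30-degree rotation, and the 20 percent stretch are made-up values, and all function names are hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def fit_rigid(src: List[Point], dst: List[Point]) -> Tuple[float, float, float]:
    """Least-squares rotation angle and translation mapping src onto dst.

    2D Procrustes/Kabsch alignment without scale; points correspond by index.
    """
    n = len(src)
    sx, sy = sum(p[0] for p in src) / n, sum(p[1] for p in src) / n
    dx, dy = sum(q[0] for q in dst) / n, sum(q[1] for q in dst) / n
    cross = dot = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        ax, ay, bx, by = px - sx, py - sy, qx - dx, qy - dy
        cross += ax * by - ay * bx  # proportional to sin of the best rotation
        dot += ax * bx + ay * by    # proportional to cos of the best rotation
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    # Shift so the rotated source centroid lands on the target centroid.
    return theta, dx - (c * sx - s * sy), dy - (s * sx + c * sy)


def rms_residual(src: List[Point], dst: List[Point], pose: Tuple[float, float, float]) -> float:
    """Root-mean-square distance left after applying the rigid pose to src."""
    theta, tx, ty = pose
    c, s = math.cos(theta), math.sin(theta)
    total = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        total += (c * px - s * py + tx - qx) ** 2 + (s * px + c * py + ty - qy) ** 2
    return math.sqrt(total / len(src))


def warp(points: List[Point], angle: float, shift: Point, stretch_x: float = 1.0) -> List[Point]:
    """Stretch along x, then rotate and shift: a stand-in for a distorted submap."""
    c, s = math.cos(angle), math.sin(angle)
    out = []
    for x, y in points:
        x *= stretch_x
        out.append((c * x - s * y + shift[0], s * x + c * y + shift[1]))
    return out


# Hypothetical corners of a 4 m x 2 m room in the global map.
room = [(0.0, 0.0), (4.0, 0.0), (4.0, 2.0), (0.0, 2.0)]
clean = warp(room, math.radians(30), (1.0, -2.0))
stretched = warp(room, math.radians(30), (1.0, -2.0), stretch_x=1.2)

print(rms_residual(clean, room, fit_rigid(clean, room)))          # close to 0
print(rms_residual(stretched, room, fit_rigid(stretched, room)))  # about 0.4
```

The undistorted copy aligns almost perfectly. The stretched copy leaves an error of about 0.4 m at every corner, and no choice of rotation or translation can remove it.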

Carlone explains that all the submaps must be deformed in a consistent way. Only then can they be aligned well with one another.

**A more flexible approach**

Borrowing ideas from classical computer vision, the researchers developed a more flexible mathematical technique that can represent all the deformations in these submaps. The method applies mathematical transformations to each submap so that the submaps can be aligned in a way that resolves the ambiguity.
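The underlying idea can be illustrated with a simplified sketch. The researchers' actual transformation may differ. This example substitutes a plain 2D affine fit, which has six parameters instead of the three in a rotation plus translation, because that is enough freedom to absorb a uniform stretch. The room, the 20 percent stretch, and all function names are made-up, illustrative assumptions.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def solve3(m: Sequence[Sequence[float]], v: Sequence[float]) -> List[float]:
    """Solve a 3x3 linear system by Gaussian elimination with partial pivoting."""
    a = [list(row) + [v[i]] for i, row in enumerate(m)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        for r in range(col + 1, 3):
            f = a[r][col] / a[col][col]
            for k in range(col, 4):
                a[r][k] -= f * a[col][k]
    x = [0.0, 0.0, 0.0]
    for r in (2, 1, 0):
        x[r] = (a[r][3] - sum(a[r][k] * x[k] for k in range(r + 1, 3))) / a[r][r]
    return x


def fit_affine(src: List[Point], dst: List[Point]) -> Tuple[List[float], List[float]]:
    """Least-squares 2D affine map: x' = a*x + b*y + c and y' = d*x + e*y + f.

    Builds the normal equations once and solves them for the x' and y' rows.
    """
    m = [[0.0] * 3 for _ in range(3)]
    rhs_x, rhs_y = [0.0] * 3, [0.0] * 3
    for (px, py), (qx, qy) in zip(src, dst):
        h = (px, py, 1.0)
        for i in range(3):
            for j in range(3):
                m[i][j] += h[i] * h[j]
            rhs_x[i] += h[i] * qx
            rhs_y[i] += h[i] * qy
    return solve3(m, rhs_x), solve3(m, rhs_y)


def rms_affine(src: List[Point], dst: List[Point], coeffs) -> float:
    """Root-mean-square distance left after applying the affine map to src."""
    (a, b, c), (d, e, f) = coeffs
    total = sum((a * px + b * py + c - qx) ** 2 + (d * px + e * py + f - qy) ** 2
                for (px, py), (qx, qy) in zip(src, dst))
    return math.sqrt(total / len(src))


# Hypothetical 4 m x 2 m room, and a submap of it stretched 20% along x,
# then rotated 30 degrees and shifted.
room = [(0.0, 0.0), (4.0, 0.0), (4.0, 2.0), (0.0, 2.0)]
ang = math.radians(30)
stretched = [(math.cos(ang) * 1.2 * x - math.sin(ang) * y + 1.0,
              math.sin(ang) * 1.2 * x + math.cos(ang) * y - 2.0) for x, y in room]

coeffs = fit_affine(stretched, room)
print(rms_affine(stretched, room, coeffs))  # essentially 0: the stretch is absorbed
```

The extra degrees of freedom help only when the distortion is consistent across the whole submap. A wall that is bent in one place and straight in another cannot be corrected by a single affine map.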

From the input images, the system outputs a 3D reconstruction of the scene and estimates of where the cameras were located. The robot can then use these estimates to localize itself in the space.

According to Carlone, once Maggio had the insight to bridge learning-based approaches and traditional optimization methods, the implementation was fairly straightforward. He adds that a solution this effective and this simple could be useful in many applications.

In the researchers' tests, the system ran faster and produced 3D reconstructions with less error than other methods, without requiring special cameras or additional tools to process the data. Using only short videos captured on a cell phone, the researchers generated close-to-real-time 3D reconstructions of complex scenes such as the interior of the MIT Chapel.

On average, the error in these 3D reconstructions was less than 5 centimeters.

Next, the researchers want to make their method more reliable in especially complicated scenes. They also plan to deploy it on real robots operating in challenging settings.

Carlone emphasizes that knowing traditional geometry pays off. If you understand deeply what is going on inside a model, he says, you can get much better results and make things far more scalable.

This work was funded, in part, by the U.S. National Science Foundation, the U.S. Office of Naval Research, and the National Research Foundation of Korea. Carlone, who is currently on sabbatical as an Amazon Scholar, completed this work before he joined Amazon.