In 2025, a secret meeting brought together some of the world’s most distinguished mathematicians. The session reportedly focused on subjecting o4-mini, OpenAI’s newest large language model, to an intensive expert evaluation.

Experts convened at the recent summit expressed considerable astonishment at the artificial intelligence model’s remarkable capacity to articulate intricate mathematical proofs. Its responses were noted for their striking resemblance to the discourse of a seasoned human mathematician.

Ken Ono, a professor of number theory at the University of Virginia, said at the time that he was struck by the model’s analytical capabilities. He characterized its reasoning as “unprecedented” and as reflective of genuine scientific methodology.

However, a critical question surfaces: Was the AI model genuinely the primary driver of the discovery, or was its contribution disproportionately lauded? And more pressingly, do we face the peril of uncritically embracing AI-generated proofs without truly understanding their underlying methodology or implications?

Ono conceded that the model could deliver persuasive, yet potentially erroneous, answers.

According to Ono, projecting authority can itself be intimidating. He said o4-mini has “mastered proof by intimidation” by stating everything with an air of complete certainty.

In the past, a mathematician’s confidence and a well-constructed argument were reliable signs of sound reasoning, because only the most skilled mathematicians could produce such arguments. That is no longer the case.

Poor mathematical understanding used to reveal itself in poor mathematical writing, according to Terence Tao, a Fields Medal winner and mathematician at UCLA. Tao told Live Science that a weak mathematician would also be a weak mathematical writer and would emphasize the wrong things. AI, he said, has now “broken that signal.”

As artificial intelligence rapidly advances, mathematicians are expressing growing concern. They fear that AI could inundate the field with sophisticated-looking mathematical proofs that, while appearing sound, contain subtle errors that would be challenging for human experts to identify.

AI-generated arguments could be mistakenly embraced due to their sophisticated and seemingly well-reasoned presentation, according to Tao.

“The AI is much better at sounding like they have the right answer than actually getting it,” Tao said, adding that the results “will always look convincing.”

Mathematician Terence Tao has sounded a note of caution regarding the wholesale adoption of AI-generated proofs. He observed that in their pursuit of stated objectives, artificial intelligence systems have a tendency to resort to “cheating.”

Whether highly technical mathematical conjectures can be verified, even when their proofs are hard to understand, may seem like an abstract concern, but it carries substantial weight. If we cannot trust a proof, we cannot reliably build new mathematical tools and techniques on top of it.

One of the most significant unresolved challenges in computational mathematics, known as the P vs. NP problem, delves into a fundamental question: are problems that are simple to verify also simple to solve? A definitive answer could revolutionize numerous fields. Imagine optimized scheduling and routing, vastly improved supply chain efficiency, accelerated microchip design, and even breakthroughs in drug discovery. However, a positive resolution also carries a significant risk, potentially undermining the security of the majority of today’s cryptographic systems. The implications of this theoretical question are far from abstract, holding tangible risks and rewards.
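To make the gap between checking and solving concrete, here is a minimal Python sketch using subset sum, a standard NP-complete problem chosen purely for illustration (it does not come from the reporting above). The function names `verify` and `solve` and the sample numbers are assumptions made for this example.

```python
from itertools import combinations


def verify(numbers, subset, target):
    """Check a proposed answer: one pass over the subset, so it stays fast."""
    remaining = list(numbers)
    for x in subset:
        if x not in remaining:
            return False  # the subset uses a number that is not available
        remaining.remove(x)
    return sum(subset) == target


def solve(numbers, target):
    """Find an answer by brute force: may try up to 2**len(numbers) subsets."""
    for size in range(len(numbers) + 1):
        for subset in combinations(numbers, size):
            if sum(subset) == target:
                return list(subset)
    return None  # no subset adds up to the target


numbers = [3, 34, 4, 12, 5, 2]
answer = solve(numbers, 9)
print(answer, verify(numbers, answer, 9))
```

Checking a proposed answer takes a single pass, while this naive search can end up trying every subset. The sketch does not show that no faster search exists; whether one always does is exactly what P vs. NP asks.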

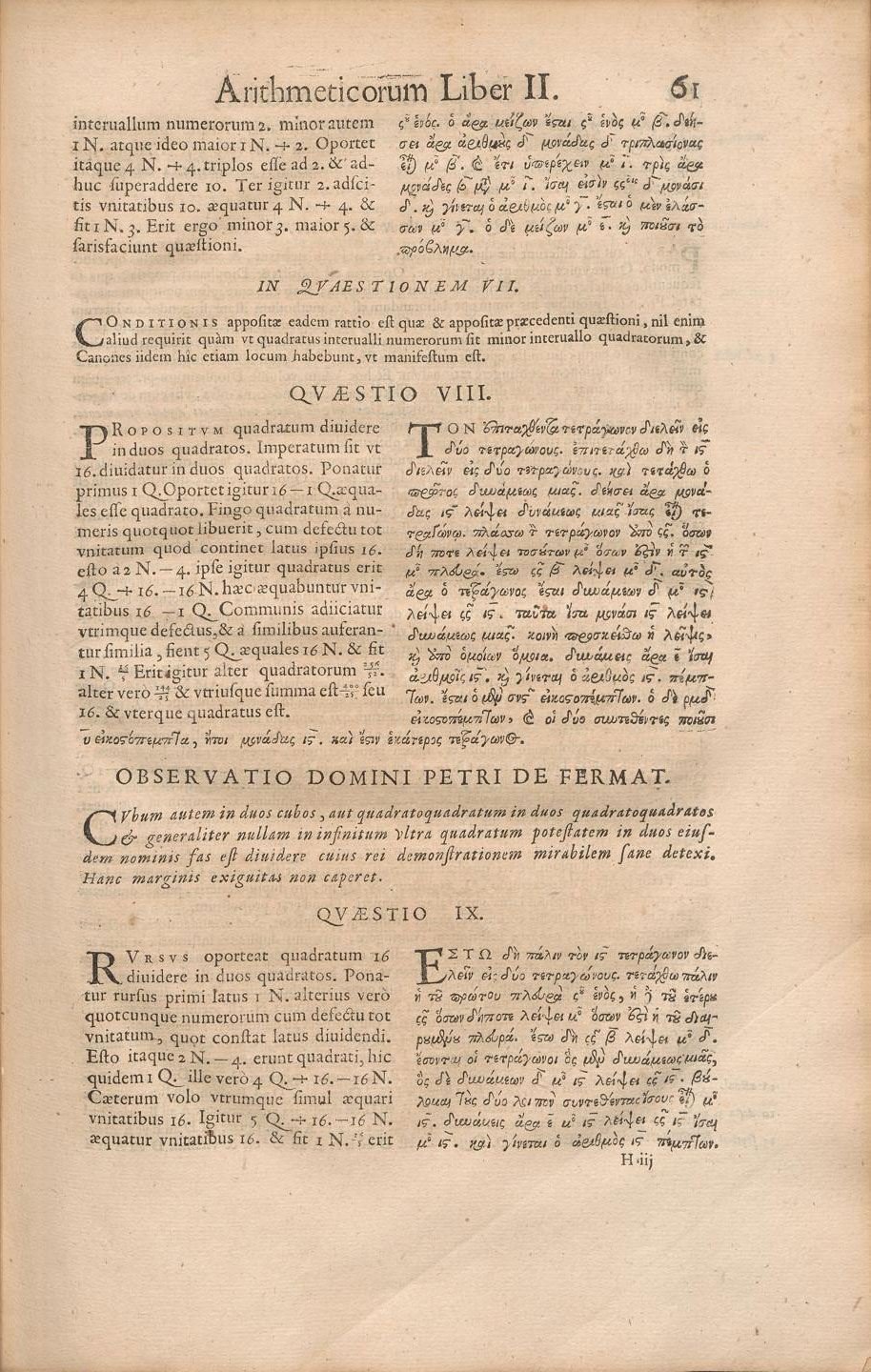

For those outside the realm of mathematics, it may come as a surprise that the proofs developed by humans have, in many ways, always been a product of social consensus. The acceptance of a mathematical argument hinges on its ability to persuade fellow experts within the field. Essentially, a proof gains credibility when other mathematicians examine it and find it sound. This means that even a widely celebrated proof doesn’t inherently confirm an absolute, undeniable truth. Renowned mathematician Andrew Granville of the University of Montreal suggests that flaws may even exist within some of the most rigorously reviewed and established human-created mathematical proofs.

Indeed, the assertion is not without merit. As reported by Live Science, Dr. Granville noted, “We’ve seen instances of highly influential research being rendered incorrect due to minor linguistic inaccuracies.”

Perhaps the best-known example is Andrew Wiles’ proof of Fermat’s Last Theorem. The theorem states that although there are positive whole numbers where one square plus another square equals a third square (like $3^2 + 4^2 = 5^2$), there are no positive whole numbers that make the same true for cubes, fourth powers, or any other higher powers.
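The contrast the theorem draws can be seen in a small numerical experiment. The Python sketch below is only an illustration under stated assumptions: the function name `power_sum_solutions` and the search limits are hypothetical, and a finite search like this is evidence, not a proof.

```python
def power_sum_solutions(exponent, limit):
    """Return all (a, b, c) with 1 <= a <= b, c <= limit and a**n + b**n == c**n."""
    # Map each perfect power up to the limit back to its base for fast lookup.
    powers = {c ** exponent: c for c in range(1, limit + 1)}
    found = []
    for a in range(1, limit + 1):
        for b in range(a, limit + 1):
            c = powers.get(a ** exponent + b ** exponent)
            if c is not None:
                found.append((a, b, c))
    return found


print(power_sum_solutions(2, 20))   # squares: several solutions appear
print(power_sum_solutions(3, 100))  # cubes: the search comes back empty
```

For squares the search turns up solutions almost immediately, starting with 3, 4 and 5. For cubes and fourth powers it finds nothing within the limit, which is what Wiles’ proof guarantees for every limit.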

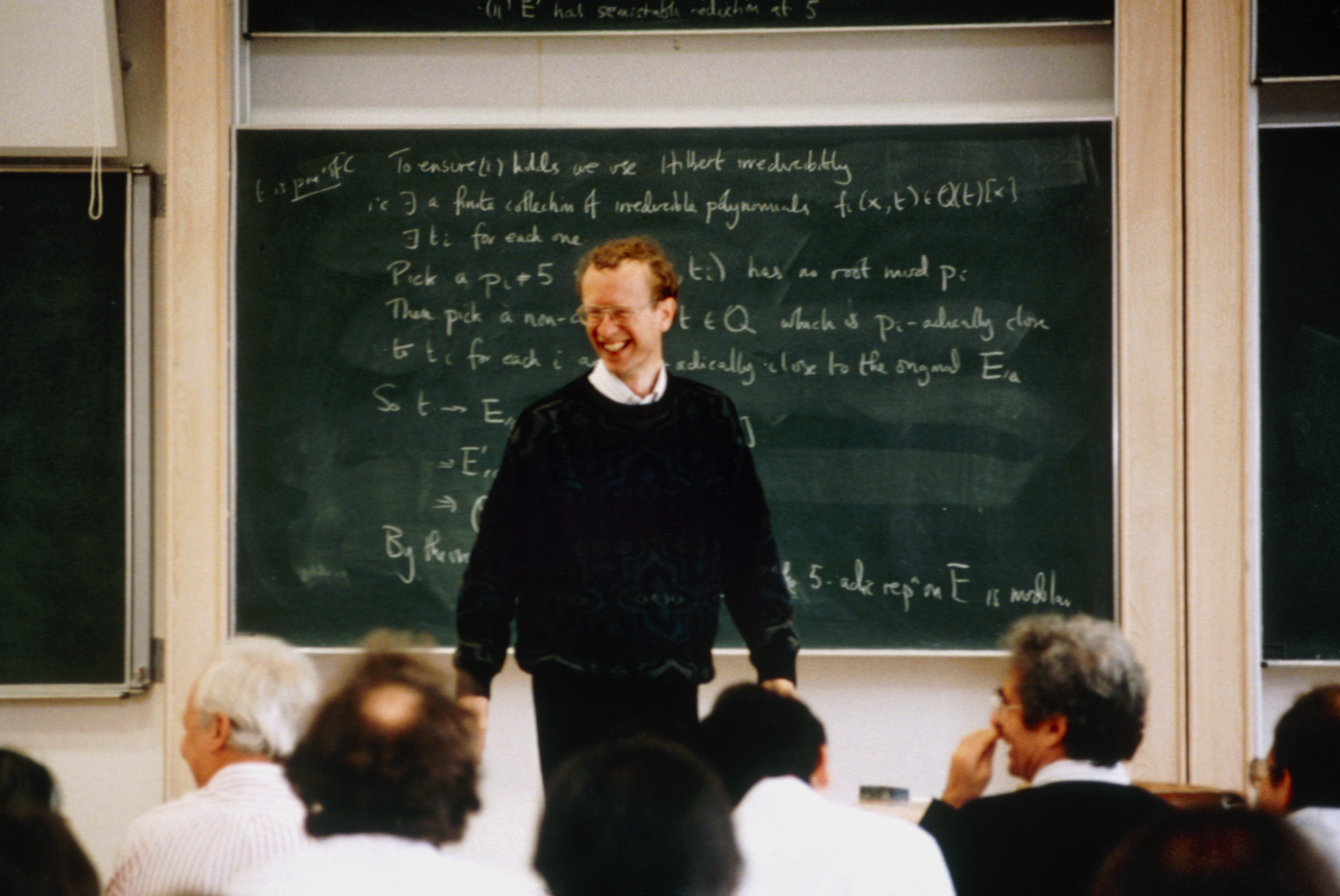

Andrew Wiles famously dedicated nearly seven years to his monumental work, largely in self-imposed isolation. The highly anticipated unveiling of his proof occurred in 1993, delivered as a series of lectures at Cambridge, drawing considerable fanfare. As Wiles concluded his final address with the now-immortal line, “I think I’ll stop there,” the audience erupted in thunderous applause, with Champagne swiftly uncorked to celebrate the extraordinary achievement. Subsequently, newspapers around the globe heralded the mathematician’s definitive victory over the 350-year-old problem.

During the rigorous peer-review process, however, a critical vulnerability was unearthed within Wiles’ intricate proof. This significant discovery necessitated another year of intense dedication from the mathematician, who meticulously addressed the identified flaw, ultimately solidifying his groundbreaking solution.

For a brief period, it was widely believed that the problem had finally been solved. That belief was premature: until the flaw was repaired, the theorem remained unproven.

To safeguard against a critical vulnerability in mathematics—the accidental acceptance of flawed proofs—experts are increasingly advocating for and implementing what they term formal verification languages. This strategic adoption aims to provide an unassailable layer of certainty, ensuring that only demonstrably correct proofs are formally recognized.

A new era of mathematical certainty is dawning, thanks to sophisticated computer programs designed to rigorously verify complex proofs. Software like ‘Lean’ exemplifies this revolution, demanding that mathematicians translate their intricate arguments into an exceptionally precise, machine-readable format.

Once formalized, these programs meticulously examine every single logical transition, applying an unyielding mathematical rigor to validate the proof’s integrity with absolute certainty. Should the system detect even the slightest logical misstep, it immediately flags the anomaly and refuses to proceed until the discrepancy is resolved. This highly structured, encoded formalization leaves no room for the linguistic ambiguities or human misunderstandings that, as mathematician Granville and others have highlighted, have historically complicated the validation of complex mathematical arguments.
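As a rough illustration of what such machine-readable statements look like, here is a minimal sketch in Lean 4. It assumes only core Lean with no additional libraries, and the theorem name `swap_and_example` is made up for this example; real formalizations are vastly longer.

```lean
-- An arithmetic fact and its machine-checked proof.
-- `decide` asks Lean to evaluate both sides and confirm they agree.
example : 3 ^ 2 + 4 ^ 2 = 5 ^ 2 := by decide

-- A small logical step, spelled out so the checker can follow it:
-- from "p and q" we may conclude "q and p".
theorem swap_and_example (p q : Prop) (h : p ∧ q) : q ∧ p :=
  ⟨h.2, h.1⟩
```

If the first statement were changed to claim that the sum equals `6 ^ 2`, Lean would reject it rather than accept a plausible-looking argument, which is the behavior the verification approach relies on.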

Kevin Buzzard, a distinguished mathematician at Imperial College London, stands as a principal figure advocating for formal verification. Speaking to Live Science, Buzzard articulated his motivation for entering the field, citing deep-seated anxieties about the inherent fallibility of human proofs, which he believed were often incomplete and inaccurate, compounded by what he saw as subpar documentation of mathematical arguments.

Mathematicians suggest that artificial intelligence, when paired with advanced software such as Lean, holds the potential to revolutionize the field by not only confirming established mathematical proofs but also by facilitating new discoveries.

**AI-Generated Mathematical Proofs: A Potential Solution to Inaccuracy?**

Renowned mathematician Terence Tao suggests a novel approach to tackle the issue of AI producing plausible yet flawed mathematical proofs. He proposes that by mandating AI systems to generate proofs in a “formally verified language,” the majority of these inaccuracies could be eliminated in principle. This method aims to introduce a rigorous, verifiable framework, thereby significantly enhancing the reliability of AI-assisted mathematical discovery.

Buzzard agreed, suggesting that a system could go beyond generating model output by translating that output into Lean and running it through Lean. He pictured a back-and-forth between the AI and Lean, with Lean flagging errors and the AI working to correct them.

By integrating Artificial Intelligence (AI) models with formal verification languages, AI could unlock solutions to some of mathematics’ most complex challenges, potentially uncovering connections that elude human ingenuity, according to leading experts.

Artificial intelligence is proving exceptionally adept at uncovering previously unrecognized connections within mathematics, according to Marc Lackenby, a mathematician at the University of Oxford. Speaking with Live Science, Lackenby highlighted AI’s capacity to bridge mathematical concepts that might not typically be associated.

Taking the idea of formally verified AI proofs to its logical extreme, there is a realistic future in which AI will develop “objectively correct” proofs that are so complicated that no human can understand them.

This is troubling for mathematicians in a different way, because it raises fundamental questions about the purpose of the discipline. What is the point of proving something that no one understands? And if no one understands it, can we truly claim to have added to human knowledge?

The notion of a proof so long and complicated that no single person can understand it in full is not new to mathematics, Buzzard said.

There are papers with 20 authors in which each author understands only their own part, Buzzard told Live Science. “Nobody understands the whole thing. And that’s fine. That’s just how it works,” he said.

Proofs that rely on computers to fill in gaps have been part of mathematics for decades, according to Buzzard. He pointed to the four-color theorem, which states that any map can be colored with no more than four colors so that no two adjacent regions share a color.

**Decades of Digital Scrutiny Solidify Landmark Mathematical Proof**

In 1976, mathematicians broke the four-color problem into thousands of small, checkable cases and wrote computer programs to verify each one. Accepting the proof therefore meant trusting that the code itself was correct.
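The statement of the theorem, and the kind of mechanical check a computer can perform, can be illustrated with a short Python sketch. The five-region map and the names `borders` and `is_valid_coloring` are hypothetical; the actual 1976 programs examined far more intricate configurations, and nothing here reproduces them.

```python
def is_valid_coloring(borders, coloring, max_colors=4):
    """Return True if at most max_colors are used and no two bordering regions match."""
    if len(set(coloring.values())) > max_colors:
        return False
    return all(coloring[a] != coloring[b] for a, b in borders)


# Hypothetical toy map: region C in the middle touches A, B, D and E,
# which form a ring around it (A-B, B-E, E-D, D-A).
borders = [
    ("C", "A"), ("C", "B"), ("C", "D"), ("C", "E"),
    ("A", "B"), ("B", "E"), ("E", "D"), ("D", "A"),
]

good = {"C": "red", "A": "blue", "B": "green", "E": "blue", "D": "green"}
bad = {"C": "red", "A": "blue", "B": "blue", "E": "green", "D": "green"}

print(is_valid_coloring(borders, good))
print(is_valid_coloring(borders, bad))
```

Checking one coloring of one map is trivial. The difficulty of the theorem lies in showing that a valid coloring exists for every possible map, which is why the case analysis was handed to a computer.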

The first computer-assisted proof was published in 1977, a milestone in mathematical history. Confidence in the proof grew steadily over the following years and was reinforced by a simpler, though still computer-aided, proof in 1997. A formally verified, machine-checked version followed in 2005, bringing near-universal acceptance.

The computational proof of the four-color theorem initially sparked considerable unease within the mathematical community, according to Buzzard. However, this early resistance has since given way to broad acceptance, with the theorem and its computer-verified demonstration now firmly established in academic curricula and standard textbooks.

While computers currently aid in mathematical proofs, often through human collaboration, this pales in comparison to the potential for an artificial intelligence to autonomously conceive, develop, and verify a proof. Such a technological leap could lead to mathematical insights so complex they might well elude human comprehension.

Artificial intelligence is fundamentally reshaping the very nature of mathematical proofs, a transformation underway regardless of whether mathematicians welcome it. For centuries, the generation and verification of proofs have been distinctly human endeavors, meticulously crafted arguments designed to persuade fellow human intellects.

However, a new paradigm looms: machines are poised to produce airtight logic, rigorously validated by formal systems, that may prove too intricate for even humanity’s most accomplished mathematicians to fully grasp.

In a prospective future, as envisioned by Lackenby, artificial intelligence is poised to revolutionize mathematical proof by autonomously managing every stage of the process. Should this scenario materialize, AI would handle everything from proposing initial hypotheses to rigorous testing and ultimate verification. Lackenby characterized this complete and successful outcome as a definitive “win,” signifying that a proof has been conclusively established.

This approach, however, ushers in a profound philosophical quandary: If mathematical proofs reach a complexity comprehensible solely by algorithms, does the discipline truly remain a human endeavor, or does it evolve into an entirely new form of intellectual pursuit? As Lackenby noted, such a shift compels us to question the very purpose of mathematics itself.